Research Introduction

Direct Object Manipulation Using Body Action for High Presence VR Space

Abstract

Immersive virtual reality (VR) spaces give users a strong sense of visual presence in a virtual world. However, few previous studies have realized natural interaction between users and the virtual world through intuitive body actions. If a VR space does not respond to a user's actions as the real world would, users cannot perceive it as having high presence and realism. This paper presents a highly realistic virtual environment system that provides high presence and natural operation. The system enables users to manipulate virtual objects directly using their body actions. It uses an optical motion capture system to measure the positions of multiple parts of the user's body without restricting the user's free movement while interacting with virtual objects. To recognize body motions as the actions users intend, the authors classify body actions into three types: comprehensive actions, local-near actions and local-far actions. These actions are recognized as the intentional body actions of a user. The results of several evaluation experiments and a usability test show that direct manipulation using the user's body actions is a quite effective means of manipulation in an immersive virtual environment. The proposed operating environment therefore enables users to manipulate a virtual world with high visual presence in the same manner as in the real world.

Proposed System

To realize interaction using body actions in an immersive virtual environment, users must remain free to move while their body actions are being measured, and all body actions should be measured. Our approach adopts an optical motion capture system using multiple infrared cameras, which measures the 3D position of each reflective marker attached to a user. The optical motion capture system has two advantages. First, the 3D positions of any body parts can be measured from the attached markers more easily and more stably than with visible-light image processing, even though the lighting conditions and background around users change constantly. Second, comprehensive and local actions can be measured and recognized at the same time, because the system covers a range wide enough to enclose the whole area in which users can move while also measuring the positions of individual markers locally.

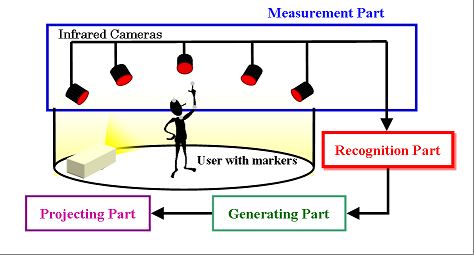

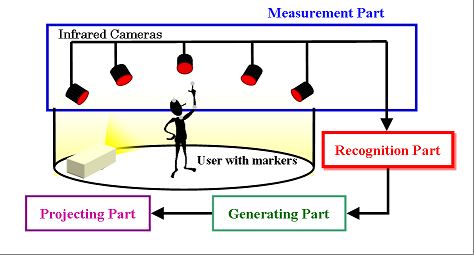

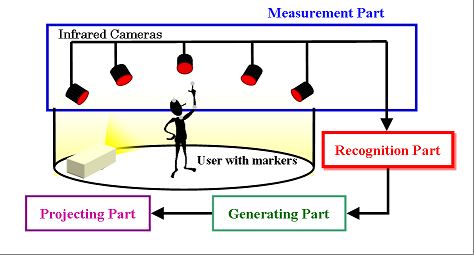

The immersive environment for direct object manipulation using body actions consists of four components (Figure 1).

-Part I : Measurement of markers

-Part II : Recognition of user's actions

-Part III : Generation of the virtual scene

-Part IV : Projection of the virtual image to users

To begin with, a user wearing reflective markers at prescribed body parts enters a space that has a large screen. The user can move freely there. In the measurement part, the 3D positions of the markers are measured by the motion capture system with multiple infrared cameras. Next, in the recognition part, the position of each marker is recognized as the position of the corresponding body part (for instance, the head, shoulder, elbow or hand), and the body actions directed toward virtual objects are determined. Our approach classifies the body actions used to reach for virtual objects into several basic actions, and distinguishes the intentions of the user by combining these basic actions correctly. The method of recognizing body actions is explained in more detail below. In the generation part, the virtual world is reconstructed efficiently according to the actions of the user. Finally, visual feedback is provided to users by projection onto the surrounding screen.

Figure 1: System Overview


To realize interaction using users' body actions, the body actions must be classified suitably. Representative actions are the following.

(a) Observing the environment by moving around the virtual space and changing the viewpoint.

(b) Operating presented virtual objects with the user's hands, such as catching, moving and replacing them.

(c) Selecting virtual objects situated away from the user by a pointing action.

These actions can be categorized into two groups: the comprehensive actions (a) and the local actions (b) and (c). The comprehensive actions are movements of the user's entire body and are recognized as locations relative to the virtual space. The authors assume that they consist of two kinds of information, the position and the direction of the user. In contrast, the local actions are approaches to virtual objects made locally with the user's arms and hands. When we operate nearby objects in the real world, most operations are done with one or both hands. An important factor for a high-presence immersive environment is therefore to realize interactions between the user and virtual objects using the hands as naturally as in the real world, so applying these metaphors to interactions in the virtual world is effective.

With regard to the local actions, the distance to objects must be considered. Although we cannot operate distant objects in the real world, we can if they are virtual objects. If objects are located within the user's reach, the operation is called a local-near action (Category (b)). If the objects are far away, the operation is called a local-far action, which is treated as an interaction particular to the virtual world (Category (c)).
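The near/far distinction can be illustrated with a minimal Python sketch. The source only states that a local-near action applies when the object is within the user's reach; measuring that reach from a shoulder marker and comparing it with a fixed `arm_reach` value are assumptions made here for illustration, and the names `ActionType` and `classify_local_action` are hypothetical.

```python
import math
from enum import Enum


class ActionType(Enum):
    LOCAL_NEAR = "local-near"  # Category (b): object within reach
    LOCAL_FAR = "local-far"    # Category (c): object beyond reach


def classify_local_action(shoulder, target, arm_reach):
    """Classify a local action by the distance from the shoulder to the target.

    shoulder, target: (x, y, z) positions in metres.
    arm_reach: assumed maximum reach in metres (hypothetical parameter).
    """
    distance = math.dist(shoulder, target)
    if distance <= arm_reach:
        return ActionType.LOCAL_NEAR
    return ActionType.LOCAL_FAR


# Example: one object close to the user, one three metres away.
shoulder = (0.0, 1.4, 0.0)
print(classify_local_action(shoulder, (0.3, 1.2, 0.4), arm_reach=0.7))
print(classify_local_action(shoulder, (0.0, 1.4, 3.0), arm_reach=0.7))
```

In a real system the reach would probably be calibrated per user; the fixed threshold is used here only to keep the sketch short.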

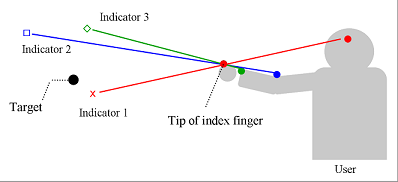

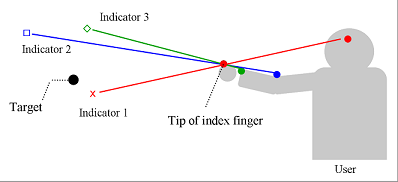

For the local-far action, it is essential to recognize which object the user is trying to operate. Since the user extends an arm and reaches a hand toward the target, the direction of operation is approximated from the pointing direction. Previous studies have reported several methods that compute the pointing direction from the user's body parts. However, in most of these methods the direction is calculated as the straight line connecting pre-established body parts, and a cursor is shown to indicate the pointing direction. As a result, because of the mismatch between the direction the user intends and the calculated direction, the user must move the cursor and cannot operate by a natural pointing action. In this system, the direction matching the user's intention is estimated using multiple body parts in order to realize direct operation of faraway objects.

If the user tries to interact with objects located far away, the direction of the user's approach must be recognized. The pointing line is the straight line connecting the position of the index finger and a point inside the body of the pointing person, which is called the basic point. However, it is well known that the basic point differs greatly between individuals and with the position of the object. Therefore the basic point cannot be determined uniquely, and recognizing the direction of approach is difficult.
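The following Python sketch illustrates why the choice of basic point matters. It computes the single-line pointing direction from a basic point through the fingertip, then compares the directions obtained from two candidate basic points. The coordinates are made-up illustrative values, not measurements from the system, and the function names are hypothetical.

```python
import math


def unit(v):
    """Return v scaled to unit length."""
    norm = math.sqrt(sum(c * c for c in v))
    return tuple(c / norm for c in v)


def pointing_direction(basic_point, fingertip):
    """Unit direction of the line from the basic point through the fingertip."""
    return unit(tuple(f - b for f, b in zip(fingertip, basic_point)))


def angle_deg(u, v):
    """Angle in degrees between two unit vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    dot = max(-1.0, min(1.0, dot))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(dot))


# Illustrative marker positions in metres (assumed values).
fingertip = (0.45, 1.35, 0.60)
eye_center = (0.00, 1.60, 0.00)
elbow = (0.30, 1.15, 0.15)

dir_from_eye = pointing_direction(eye_center, fingertip)
dir_from_elbow = pointing_direction(elbow, fingertip)
mismatch = angle_deg(dir_from_eye, dir_from_elbow)
print(f"Angular difference between the two candidate lines: {mismatch:.1f} degrees")
```

With a distant target, even a modest angular difference between candidate lines translates into a large positional error, which is consistent with the difficulty described above.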

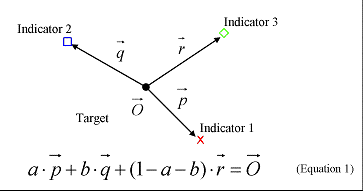

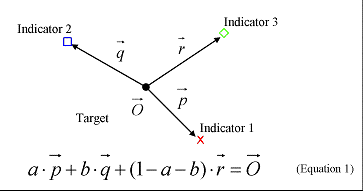

This system proposes a method that estimates the pointing direction from several indicators. The indicators are the straight lines that connect the tip of the index finger with the body parts involved in the pointing action. In this case we use three points, the center of the eyes, the right elbow and the inside of the right wrist (Figure 2-i), and reflective markers are attached at each of these parts in order to measure their 3D positions. The direction is determined by computing weights for these indicators according to Equation 1 (Figure 2-ii). Equation 1 is expressed in terms of the position vector of the target and the position vectors to the nearest point on each indicator. The weights a and b of Indicators 1 and 2 are calculated by Equation 1.
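The geometric ingredients of this method can be sketched in Python. Equation 1 itself is not reproduced on this page, so the sketch below does not implement it. It only shows how to find the nearest point on an indicator line to a target, and one possible way to blend three indicator directions with weights. Treating the weights as `a`, `b` and `1 - a - b`, and ordering the indicators as eye, elbow, wrist, are assumptions made for illustration; all function names are hypothetical.

```python
import math


def sub(p, q):
    return tuple(a - b for a, b in zip(p, q))


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def unit(v):
    norm = math.sqrt(dot(v, v))
    return tuple(c / norm for c in v)


def nearest_point_on_line(origin, through, target):
    """Nearest point to `target` on the infinite line from `origin` through `through`."""
    d = sub(through, origin)
    t = dot(sub(target, origin), d) / dot(d, d)
    return tuple(o + t * c for o, c in zip(origin, d))


def blended_direction(fingertip, eye, elbow, wrist, a, b):
    """Blend the three indicator directions into one unit pointing direction.

    Assumption: weights are (a, b, 1 - a - b) for the eye, elbow and wrist
    indicators. This is an illustrative blend, not the source's Equation 1.
    """
    weights = (a, b, 1.0 - a - b)
    directions = [unit(sub(fingertip, p)) for p in (eye, elbow, wrist)]
    blended = tuple(
        sum(w * d[i] for w, d in zip(weights, directions)) for i in range(3)
    )
    return unit(blended)


# Illustrative marker positions in metres (assumed values).
fingertip = (0.45, 1.35, 0.60)
eye = (0.00, 1.60, 0.00)
elbow = (0.30, 1.15, 0.15)
wrist = (0.40, 1.30, 0.45)
target = (2.0, 1.0, 3.0)

# Offset from the target to the nearest point on the eye-fingertip indicator.
offset = sub(nearest_point_on_line(eye, fingertip, target), target)
print("Offset from target to eye indicator:", offset)
print("Blended direction:", blended_direction(fingertip, eye, elbow, wrist, 0.5, 0.3))
```

The offsets from the target to each indicator are the kind of quantity the text describes as input to the weighting; how the source turns them into the weights a and b is defined by Equation 1 and is left open here.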

Figure 2: Method for estimating the direction of pointing

